How to Craft and Adjust Your AI Policy Every Year (without wasting time)

AI in education is not a problem you solve once and move on from. Every fall, your staff returns with new tools they discovered over the summer. Your students arrive already using apps your IT team has never heard of. And somewhere in the news cycle, there's a fresh story about an AI-generated assignment, a deepfake, or something else that slipped past a school that didn't know what to look for.

An AI policy that has sat in a shared drive since 2023 is probably not helping your school. It's a liability dressed up as a document.

The good news is that building a strong AI policy (and keeping it current) does not have to consume your leadership team's most precious hours. Here's how to do it right.

I Built a Free Tool That Helps

The most time-consuming part of building an AI policy from scratch is the thinking. What are your grade levels? How do your teachers currently think about AI? What has your community experienced?

Tools that can structure that thinking (guiding you through the key questions, then generating a tailored draft) compress the front end of the process significantly. The goal is never to skip the thinking, but to make sure the thinking produces something useful faster than starting from a blank page.

Whatever process you use, the output should be a document your teachers will actually open and a framework your students will actually understand. If it's sitting unread in a shared drive, it's not a policy. It's a formality.

I built a free tool to help you, just as I've helped 20 other schools and institutions craft and revise these policies. It's called Policy Compass.

Policy Compass helps schools build and maintain AI policies through guided questions, AI-generated drafts tailored to your school's context, and audience-segmented documents your whole community can actually use.

Why Your AI Policy Has a Shelf Life

Most institutional policies are written to last. Acceptable use policies, harassment policies, emergency procedures: most of these change slowly, if at all.

AI is the exception.

The tools and technology are evolving faster than any policy committee can convene. ChatGPT's capabilities in September are meaningfully different from what they were in January. New AI tutors, writing assistants, grading aids, and image generators launch every month. What students can do with a phone and a free account changes constantly.

A policy written to address "AI chatbots" in 2023 may not even use the right vocabulary for what your students are encountering today. That's not a criticism of whoever wrote it (my name is on a few of those as well!). It's just the nature of this particular technology moment.

Your AI policy needs an annual review that is built into the school calendar, not triggered by a crisis.

What a Useful AI Policy Actually Contains

Before you can review a policy, you need one worth reviewing. Many school AI policies fail not because they're outdated, but because they were never specific enough to guide actual behavior.

A policy that says "students must use AI responsibly" is not a policy. It's a wish.

Here's what the most effective school AI policies include.

1. A Statement of Purpose (that sounds like your school)

Why does your school have an AI policy at all? This isn't filler — it anchors every decision that follows. A school that frames AI as a tool for democratizing access to expertise will make different permission calls than one that frames it primarily as an academic integrity risk. Your philosophy should be explicit.

2. A Permission Framework with actual levels

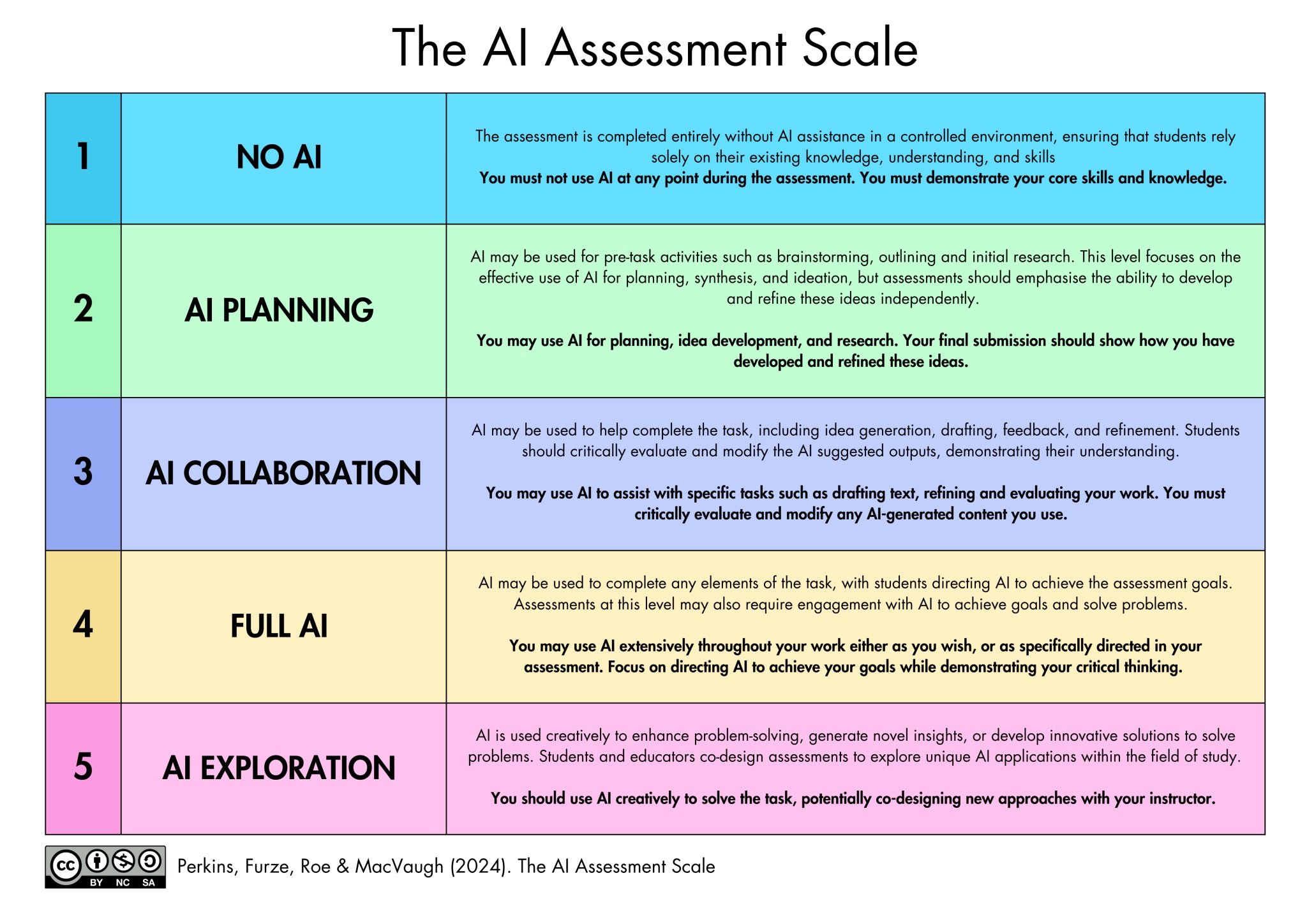

The most useful piece of any AI policy is a clear, visual permission system teachers can use at the assignment level. Not a blanket "AI is allowed" or "AI is not allowed", but a tiered framework that lets educators communicate expectations precisely.

Something like the AIAS Scale:

When a teacher can write "Level 2" on an assignment sheet, students know exactly what's permitted. Parents know what to expect. There's no ambiguity to exploit.
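One way to make a tiered framework concrete is to keep the levels in a small lookup that assignment sheets and course pages can draw from. The sketch below is illustrative only: the four level names and their descriptions are hypothetical placeholders (not the AIAS definitions), and `describe_level` is an invented helper.

```python
from enum import IntEnum


class AIPermission(IntEnum):
    """Hypothetical permission tiers; replace with your school's own levels."""
    NO_AI = 1
    AI_FOR_IDEAS = 2
    AI_FOR_EDITING = 3
    AI_FULL_USE = 4


# Student-facing wording for each tier (placeholder text, not official language).
DESCRIPTIONS = {
    AIPermission.NO_AI: "Complete the work without any AI tools.",
    AIPermission.AI_FOR_IDEAS: "AI may be used to brainstorm and outline only.",
    AIPermission.AI_FOR_EDITING: "AI may be used to revise work you wrote yourself.",
    AIPermission.AI_FULL_USE: "AI may be used throughout, with disclosure.",
}


def describe_level(level: int) -> str:
    """Return the label a teacher could print on an assignment sheet."""
    tier = AIPermission(level)  # raises ValueError for an undefined level
    return f"Level {tier.value}: {DESCRIPTIONS[tier]}"


print(describe_level(2))
```

Keeping the wording in one place means a change made during the annual review shows up everywhere the levels are printed.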

3. Audience-specific guidance

Your teachers, students, and families need different things from the same policy. A teacher wants to know what professional tools are approved for lesson planning and grading assistance. A student wants to know what happens if they submit AI-generated work. A parent wants to know what data their child's school is sharing with third-party AI providers.

A single policy document that tries to address all three audiences usually serves none of them well. Effective policies segment by audience — same underlying rules, different language and emphasis.

4. A named list of approved tools

"Approved AI tools may be used with teacher permission" is almost meaningless. Which tools? Approved by whom? For what purposes? A useful policy names specific tools, explains who has vetted them, what student data they collect, and under what circumstances they're appropriate.

Yes, this list will need updating. That's the point — it forces an annual conversation about what you're actually endorsing.
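If the approved list lives in a spreadsheet or a small script, each entry can carry the details described above. This is a minimal sketch under stated assumptions: the tool names, field names, and the one-year review window are all hypothetical, chosen only to show the shape of a record.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ApprovedTool:
    """One entry in a hypothetical approved-tools registry."""
    name: str
    vetted_by: str
    student_data_collected: tuple[str, ...]
    approved_purposes: tuple[str, ...]
    last_reviewed: date


def needs_review(tool: ApprovedTool, today: date, max_age_days: int = 365) -> bool:
    """True when the entry has not been reviewed within the allowed window."""
    return (today - tool.last_reviewed).days > max_age_days


# Example entries; both tools are invented for illustration.
registry = [
    ApprovedTool(
        name="ExampleWriter",
        vetted_by="IT director",
        student_data_collected=("email address", "submitted text"),
        approved_purposes=("drafting feedback",),
        last_reviewed=date(2024, 6, 1),
    ),
    ApprovedTool(
        name="ExampleTutor",
        vetted_by="IT director",
        student_data_collected=("email address",),
        approved_purposes=("math practice",),
        last_reviewed=date(2025, 5, 15),
    ),
]

# Names of entries that are due for another look as of the given date.
stale = [tool.name for tool in registry if needs_review(tool, date(2025, 8, 1))]
print(stale)
```

Recording who vetted each tool and when makes the annual conversation a matter of scanning for stale entries.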

5. Academic integrity definitions that account for AI

Most existing academic integrity policies were written when plagiarism meant copying human-authored text. AI-generated content requires new definitions: What constitutes AI-assisted work versus AI-generated work? Does using AI for brainstorming count as a violation if the assignment prohibits it? How should students cite AI assistance?

These definitions need to be precise enough that a teacher can apply them consistently and a student can understand them without a lawyer.

The Annual Review Process (That Won't Eat Your Summer)

Here's the honest problem with AI policy review: it tends to be scheduled for strategic planning retreats, assigned to a committee that meets four times a year, and then quietly deprioritized when September arrives and everyone is managing orientation.

The solution is to make the review process genuinely lightweight — not a full rewrite, but a structured audit.

Step 1: Gather signal from the year (20 minutes)

Before you touch the document, collect three inputs:

Academic integrity incidents involving AI — How many were there? What patterns emerged? Were staff applying the policy consistently?

Teacher feedback — What questions came up repeatedly? Where did educators feel the policy left them without guidance?

New tools in use — What tools are students and teachers actually using that aren't in your current approved list?

This doesn't require a survey. It requires a 20-minute conversation with your academic integrity officer, your department heads, and your IT director.

Step 2: Update the permission framework (30 minutes)

Your permission levels are the most used part of the policy. Review them through one lens: Did they actually guide behavior this year?

If teachers weren't using them, ask why. If the levels were confusing, simplify them. If a new use case emerged — say, AI-generated images in art class — decide where it fits.

Step 3: Refresh the approved tools list (45 minutes)

This is your most time-sensitive update. Remove tools that were deprecated or flagged for privacy issues. Add tools your staff has been using informally and you've now assessed. Note any significant policy changes from existing tools (many AI providers update their terms of service and data practices annually).

If you don't have a formal vetting process yet, this is the year to create one — even a simple checklist covering data storage, student privacy compliance, and cost.
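A simple checklist like that can be captured in a few lines. The sketch below assumes the three items named above are pass/fail questions; the item keys and the `unresolved_items` and `is_approved` helpers are hypothetical names, not part of any existing tool.

```python
# The three checklist items named in the text, as hypothetical keys.
CHECKLIST = (
    "data_storage",
    "student_privacy_compliance",
    "cost",
)


def unresolved_items(answers: dict[str, bool]) -> list[str]:
    """Return checklist items that are missing or not yet satisfied."""
    return [item for item in CHECKLIST if not answers.get(item, False)]


def is_approved(answers: dict[str, bool]) -> bool:
    """A tool passes only when every checklist item is satisfied."""
    return not unresolved_items(answers)


# Example: a tool reviewed for storage and cost, but not yet for privacy.
review = {"data_storage": True, "cost": True}
print(unresolved_items(review))
print(is_approved(review))
```

Treating a missing answer as a failure is a deliberate choice here: a tool should not be approved just because nobody got around to asking a question.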

Step 4: Revise the audience guides (30 minutes each)

Your student-facing guide and family guide are the most likely to be read by the people who actually need to follow the policy. Update them to reflect any changes in the permission framework or approved tools, and revise any language that the year revealed was confusing.

This is also the moment to add examples. Concrete examples of "allowed" and "not allowed" do more work than any amount of policy language.

Step 5: Communicate the changes (not optional)

A policy that changes without anyone knowing it changed is worse than useless — it creates inconsistency. At the start of each year, your communication plan should include:

A brief all-staff presentation covering what changed and why

Student-facing communication (advisory, assembly, or digital distribution) that doesn't just share the document but explains the key points

A parent communication that flags significant changes from the previous year
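To see how lightweight the audit is, the time estimates from Steps 1 through 4 can be added up. The sketch assumes two audience guides (the student-facing guide and the family guide); Step 5 is left out because no time estimate is given for it.

```python
# Time estimates taken from the step headings above, in minutes.
STEP_MINUTES = {
    "Gather signal from the year": 20,
    "Update the permission framework": 30,
    "Refresh the approved tools list": 45,
}
MINUTES_PER_AUDIENCE_GUIDE = 30
AUDIENCE_GUIDES = 2  # assumption: student-facing guide and family guide


def total_review_minutes(guides: int = AUDIENCE_GUIDES) -> int:
    """Sum the fixed steps plus 30 minutes for each audience guide."""
    return sum(STEP_MINUTES.values()) + MINUTES_PER_AUDIENCE_GUIDE * guides


total = total_review_minutes()
print(f"{total} minutes (about {total / 60:.1f} hours)")
```

With two guides that comes to 155 minutes, and adding a third guide for teachers would bring it to 185.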

What Makes This Sustainable

The schools that get AI policy right are not the ones with the longest documents or the most restrictive rules. They're the ones that treat policy as a living practice rather than a one-time project.

Start with your school's actual philosophy. A policy built around your values is easier to apply consistently than one built around fear of what might go wrong.

Write for your specific community. A K–8 independent school in a small town has different needs than a large urban high school. Generic policy templates are starting points, not finished products.

Involve the people who will use it. Teachers who helped shape the permission framework use it. Teachers who received it as a PDF from administration ignore it.

Build in the annual review before you need it. Add it to the academic calendar now, assign a clear owner, and keep the process short enough that it actually happens.

And check out Policy Compass: https://policycompass.org/